In the current landscape of digital production, the pressure to “scale content” has led many teams to adopt generative tools with a singular focus on velocity. The promise is simple: take a static asset and turn it into a high-engagement motion piece in seconds. However, this rush toward speed frequently overlooks the fundamental necessity of creative control. When a workflow is optimized solely for throughput, the output often suffers from weak brand alignment, technical glitches, and a general loss of artistic intent.

The tension between speed and control is particularly visible in the transition from static imagery to motion. While the technology has advanced significantly, the “one-click” solution remains a myth for professional-grade output. Teams that treat generative AI as a vending machine—where a prompt goes in and a finished product comes out—quickly find themselves buried in “reroll” cycles that consume more time than traditional animation would have.

The Temptation of the Instant Asset

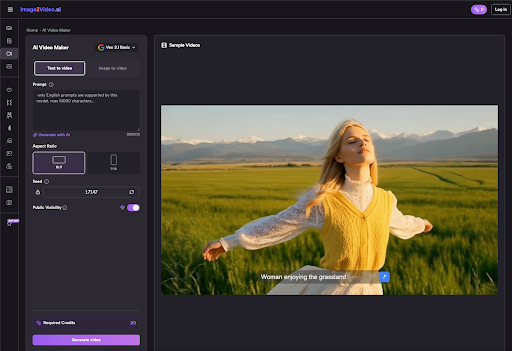

For a marketing team or a solo creator, the appeal of Photo to Video AI is obvious. It bypasses the need for complex keyframing, rigging, and physics simulations. In theory, you provide a high-quality product shot, and the engine interprets the lighting, depth, and texture to create realistic movement.

The mistake begins when teams equate “ease of use” with “predictability.” Many operators assume that if the initial image is perfect, the video will naturally follow suit. In practice, the generative process introduces variables that are often outside the creator’s immediate control. A small shift in a prompt or a slight variation in the model’s seed can result in a video that looks visually stunning but is functionally useless for the intended campaign. Speed, in this context, becomes a trap. If you can generate ten videos in ten minutes, but none of them accurately represent the brand’s motion guidelines, you haven’t actually saved any time.

Control vs. Chaos: The Image to Video Bottleneck

The core challenge of any Image to Video workflow is maintaining the integrity of the source material. When using Image to Video AI, the engine is essentially hallucinating every frame it was not given. This is a massive computational feat, but it is also where the “chaos” enters.

Common failures in speed-first workflows include:

- Anatomical and Structural Drift: The AI understands the pixels of the source image but may not understand the underlying physics. A human arm might turn into a fluid shape, or a solid product container might warp during a pan.

- Temporal Inconsistency: The lighting in frame one may not match the lighting in frame sixty. This “flicker” is a hallmark of unrefined AI video and is a clear indicator of a workflow that prioritized speed over technical oversight.

- Loss of Brand Identity: If a team is moving too fast to fine-tune motion parameters, the AI defaults to its own “trained” style, which might be too cinematic or “dreamy” for a clean corporate aesthetic.

The Quality of the Initial Asset

A frequent pitfall is the belief that the AI can “fix” a mediocre starting point. In the realm of Photo to Video, the input image acts as the blueprint. If the blueprint is cluttered, low-resolution, or lacks clear depth cues, the resulting video will likely exhibit artifacts. Teams often spend hours trying to prompt their way out of a problem that could have been solved by choosing a better source image.

Control starts before the “Generate” button is ever pressed. It involves selecting images with high contrast, clear subjects, and minimal background noise. When speed is the priority, these pre-production steps are skipped, leading to a “garbage in, garbage out” cycle that frustrates creative directors and stakeholders alike.
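Part of this pre-production check can be sketched in code. The example below is a minimal illustration, not a standard: the function name `preflight` and every threshold are assumptions made for this sketch, and it only covers resolution and contrast. Clutter and depth cues still need a human eye.

```python
from statistics import pstdev


def preflight(gray_rows, min_width=1024, min_height=576, min_rms_contrast=0.15):
    """Return a list of problems found in a grayscale source image.

    gray_rows is a list of rows, each a list of 0-255 luminance values.
    All thresholds are hypothetical defaults; tune them against your own
    accepted and rejected source images.
    An empty list means the image passed both checks.
    """
    height = len(gray_rows)
    width = len(gray_rows[0]) if height else 0
    problems = []

    # Resolution check: small sources leave the model little detail to work with.
    if width < min_width or height < min_height:
        problems.append(f"low resolution: {width}x{height}")

    # RMS contrast: standard deviation of luminance on a 0..1 scale.
    pixels = [value / 255 for row in gray_rows for value in row]
    contrast = pstdev(pixels) if pixels else 0.0
    if contrast < min_rms_contrast:
        problems.append(f"low contrast: {contrast:.2f}")

    return problems
```

Running a check like this before generation costs seconds, while discovering the same problem through failed generations can cost an afternoon.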

Why Speed Often Costs More in the Long Run

The “Speed Paradox” is that the faster you try to work with generative AI, the more human hours you often end up spending. This is largely due to the lack of a standardized feedback loop. In traditional video editing, if a movement is too fast, you adjust the keyframe. In a generative workflow, if the movement is too fast, you might have to re-prompt the entire scene, hoping the AI understands “slower” the same way you do.

There is also a significant degree of technical uncertainty. For instance, current models often struggle with complex interactions, such as hands grasping an object or liquid being poured. If a team builds a workflow around these specific actions without realizing how unreliable the models’ grasp of physics currently is, they will waste days attempting the impossible. Acknowledging that the technology cannot yet reliably simulate high-viscosity fluids or intricate finger movements is a form of control. It allows the team to pivot to a different creative concept rather than fighting the tool.

Technical Uncertainty and Temporal Instability

We must also address the fact that many of these tools are “black boxes.” While we can influence the output through prompts and motion brushes, we do not have a granular “edit” function for the internal weights of the model. This means that a workflow that worked yesterday might produce different results today if the model has been updated or if the server load affects the generation process.

This uncertainty requires a buffer. A team optimized for speed rarely accounts for the 20% of “broken” generations that are inherent to the medium. Without this buffer, deadlines are missed, and the “fast” workflow becomes a liability.
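The size of that buffer can be estimated with simple arithmetic. The sketch below takes the 20% figure above as its failure rate and assumes each generation succeeds or fails independently, which is a simplification. Both function names are made up for this illustration.

```python
from math import ceil, comb


def attempts_to_budget(usable_needed, failure_rate):
    """Expected number of generations needed to get usable_needed good clips."""
    if not 0 <= failure_rate < 1:
        raise ValueError("failure_rate must be in [0, 1)")
    return ceil(usable_needed / (1 - failure_rate))


def chance_of_enough(attempts, usable_needed, failure_rate):
    """Probability that at least usable_needed of attempts succeed.

    Uses the binomial distribution, so it assumes independent generations.
    """
    success_rate = 1 - failure_rate
    return sum(
        comb(attempts, k) * success_rate**k * failure_rate ** (attempts - k)
        for k in range(usable_needed, attempts + 1)
    )


budget = attempts_to_budget(10, 0.20)       # 13 generations for 10 usable clips
odds = chance_of_enough(budget, 10, 0.20)   # about 0.75
```

Under these assumptions, budgeting only the expected 13 attempts still leaves roughly a one-in-four chance of coming up short, so a schedule that needs 10 usable clips should plan for more than 13 generations.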

Building a Controlled Pipeline

To move away from the speed trap, teams need to implement a “Control-First” architecture. This doesn’t mean moving slowly; it means moving deliberately.

- Segmented Generation: Instead of trying to generate a 10-second clip in one go, successful teams generate 2-3 second bursts. This offers much more control over the motion and reduces the likelihood of structural drift.

- Asset Conditioning: Use Image to Video tools as one part of a larger chain. This might involve cleaning up the source image in Photoshop first or using an AI upscaler after the video is generated to fix resolution issues.

- Human-in-the-Loop Curation: Speed-focused teams often automate the posting of content. A control-focused team has an editor who reviews every frame for “glitches” that the AI might not see as errors but a human eye will find jarring.
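The segmented generation step can be planned up front. This is a minimal sketch, assuming equal-length segments and a hypothetical 3-second cap; `plan_segments` is an illustrative name and not part of any tool's API.

```python
from math import ceil


def plan_segments(total_seconds, max_segment_seconds=3.0):
    """Split a clip into equal-length segments no longer than the cap.

    Returns a list of (start, end) times in seconds.
    """
    if total_seconds <= 0 or max_segment_seconds <= 0:
        raise ValueError("durations must be positive")
    count = ceil(total_seconds / max_segment_seconds)
    length = total_seconds / count
    return [
        (round(i * length, 3), round((i + 1) * length, 3))
        for i in range(count)
    ]


segments = plan_segments(10)
# [(0.0, 2.5), (2.5, 5.0), (5.0, 7.5), (7.5, 10.0)]
```

Each segment can then be generated and reviewed on its own, so a failed burst costs one short reroll instead of the whole clip.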

The Limitations of Current Models

It is important to reset expectations regarding what these tools can actually do. While we see incredible demos online, those are often the result of hundreds of attempts. In a real-world production environment, you cannot rely on “getting lucky.”

Current Image to Video technology is exceptional at atmospheric movement: clouds, hair blowing, slow pans, and lighting shifts. It is notably less reliable at specific, directed actions like a character typing on a keyboard or a specific product interaction. When a workflow ignores these limitations in favor of speed, the result is usually an “uncanny valley” effect that can actually damage a brand’s credibility.

Operational Shift: From Creators to Curators

The final mistake many teams make is failing to change their internal roles. In a traditional workflow, the “maker” spends 90% of their time creating and 10% reviewing. In an AI-driven workflow, that ratio should almost flip. The operator becomes a curator and a refiner.

When you prioritize control, you accept that the AI is a collaborator with its own “opinions” on movement. By slowing down to analyze the seeds, the motion buckets, and the prompt weights, you gain the ability to replicate success. Speed-first teams rarely document their successes; they just move to the next prompt. Control-first teams build a library of “proven” prompts and image types that consistently yield high-quality Photo to Video results.
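A library of “proven” settings can be as simple as a structured log. The sketch below is one possible shape, not a prescribed schema: the class, its field names, and the helper functions are all assumptions for illustration, and the parameters your tool exposes may differ.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class GenerationRecord:
    """One generation attempt and the settings needed to reproduce it."""
    source_image: str
    prompt: str
    seed: int
    motion_bucket: int
    prompt_weights: dict = field(default_factory=dict)
    approved: bool = False  # set by the human reviewer, never by the tool
    notes: str = ""


def proven_settings(records):
    """Keep only the attempts a reviewer approved."""
    return [record for record in records if record.approved]


def to_json(records):
    """Serialize records so the library can be shared across the team."""
    return json.dumps([asdict(record) for record in records], indent=2)


log = [
    GenerationRecord("bottle.png", "slow pan left", seed=42, motion_bucket=60,
                     approved=True, notes="clean label, no warp"),
    GenerationRecord("bottle.png", "fast orbit", seed=7, motion_bucket=180,
                     notes="container warps mid-pan"),
]
library = proven_settings(log)  # only the approved slow-pan attempt remains
```

Recording failures alongside successes matters as much as the approved entries, because the notes on a rejected attempt tell the next operator which settings to avoid.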

Ultimately, the goal of using AI in video production shouldn’t be to see how fast you can hit “Generate.” It should be to see how much more quality you can extract from each creative idea. By shifting the focus from throughput to intentionality, teams can finally bridge the gap between AI experimentation and professional-grade production. The paradox is that the most “productive” teams are often those who are willing to slow down and master the nuances of the tool, rather than those who are simply racing to the finish line.